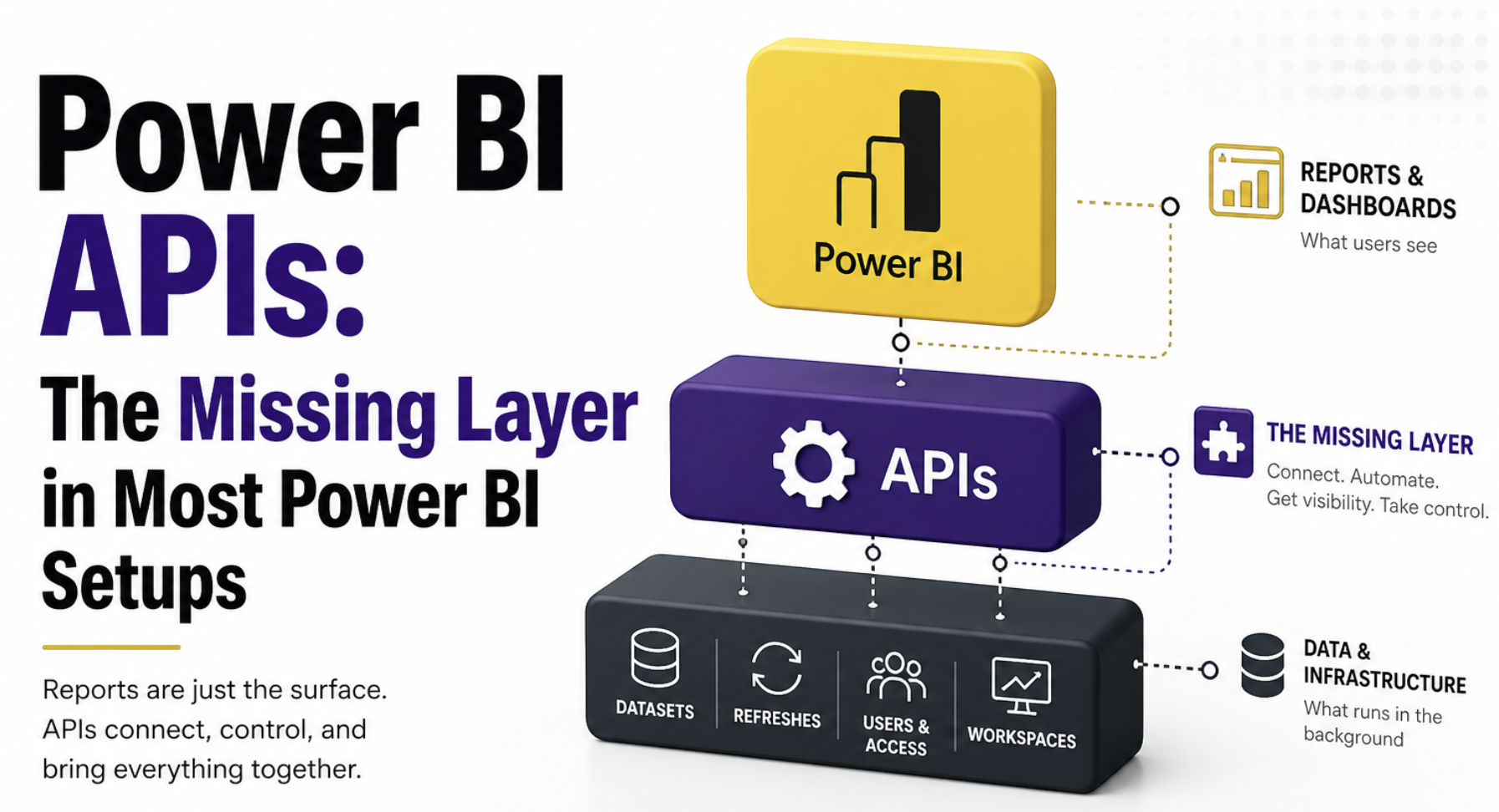

A lot of Power BI setups feel solid until you have to depend on them. On the surface, everything looks in place: reports are running, numbers are there, and users are happy (sometimes). But underneath, the setup often works more like a set of disconnected pieces than a system. The moment something breaks, whether it’s a failed refresh or a report behaving differently, you realize there’s no easy way to understand what happened or fix it quickly.

That gap doesn’t come from bad reports. It comes from everything around them being handled manually. Most teams focus on building the model and getting the visuals right, but very little attention goes into control, monitoring, or visibility across the setup. That missing layer is what starts to hurt as things scale, and that’s exactly where Power BI APIs come in.

In this post

We’ll focus on the points where most Power BI setups start to struggle once things scale:

- Why refresh stops being predictable and turns into a manual task

- Why you don’t really know what’s being used across reports and datasets

- What actually breaks when you make a change

Power BI doesn’t stop at reports

Most of the effort in Power BI goes into building the model and getting the report and visuals right. That’s the visible part, and naturally, where most of the focus stays. But once things start to grow, the problems don’t come from there. They come from everything around it: how refresh is managed, how changes are tracked, and how you know what’s actually being used.

That’s the part most setups don’t really control. And that’s where APIs start to make sense. Not as an advanced feature, but as the layer that helps you manage what sits behind the reports. We will cover three pain points in this post, but there is much more you can achieve with APIs.

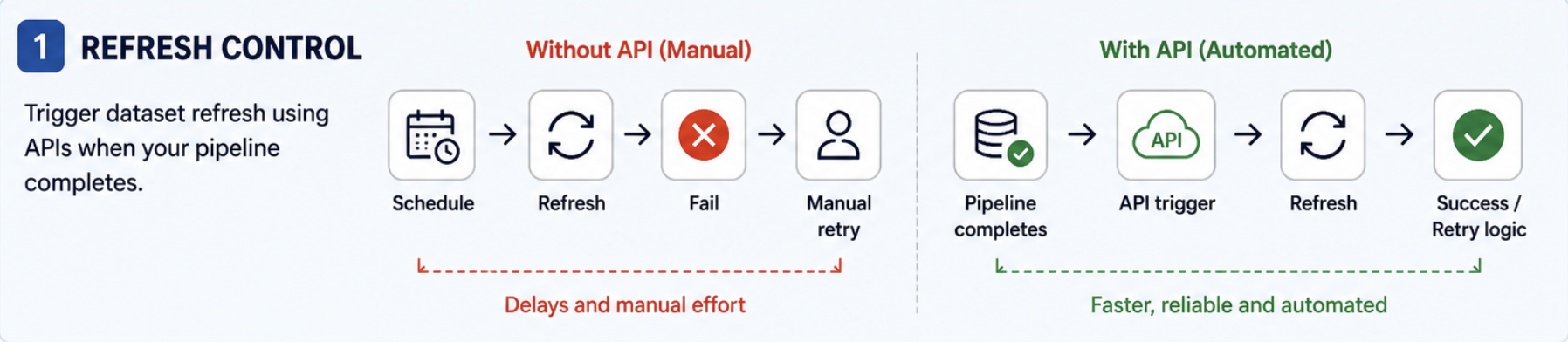

When refresh starts failing for no clear reason

Scheduled refresh works until datasets start depending on upstream jobs or other datasets. One process finishes late, another refresh starts too early, and suddenly, you are manually retrying refreshes instead of controlling them.

How can you implement it in the real world?

- Pick one dataset that often fails or runs at the wrong time.

- Identify what needs to be finished before it refreshes; this could be a file load, pipeline, or another dataset.

- Instead of relying only on the schedule, trigger the refresh using the Power BI API. This can be done with tools like Power Automate, Azure Data Factory, or even a simple script.

- Set this trigger to run right after your upstream process completes.

- Add a basic check that looks at refresh status and retries once if it fails.

If you’re new to APIs, start with Power Automate. It hides most of the complexity and lets you focus on the logic first. Once that works, you can move to scripts if needed.

Pro tip: Don’t try to move everything to API-driven refresh at once. Start with one unstable dataset. Also, avoid triggering too many refreshes in parallel; capacity limits will hit you faster than you expect.
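If you do move to a script, the steps above can be sketched roughly as follows. This is a minimal sketch, not a definitive implementation: it assumes you already have a Microsoft Entra ID access token with permission to refresh the dataset (getting one is out of scope here), and the function names (`call_api`, `refresh_with_retry`) and the polling intervals are illustrative choices. The endpoints are the Power BI REST API's dataset refresh and refresh history calls as I understand them; check the current documentation before relying on them. In refresh history, a status of `Unknown` generally means the refresh is still in progress.

```python
import json
import time
import urllib.request

API_ROOT = "https://api.powerbi.com/v1.0/myorg"


def call_api(method, url, token, body=None):
    """Send one authenticated request; return parsed JSON, or {} for an empty body."""
    data = json.dumps(body).encode("utf-8") if body is not None else None
    request = urllib.request.Request(url, data=data, method=method)
    request.add_header("Authorization", f"Bearer {token}")
    request.add_header("Content-Type", "application/json")
    with urllib.request.urlopen(request) as response:
        raw = response.read()
    return json.loads(raw) if raw else {}


def refreshes_url(group_id, dataset_id):
    """URL for both triggering a refresh (POST) and reading history (GET)."""
    return f"{API_ROOT}/groups/{group_id}/datasets/{dataset_id}/refreshes"


def latest_status(group_id, dataset_id, token, send=call_api):
    """Status of the most recent refresh, or None if there is no history yet."""
    history = send("GET", refreshes_url(group_id, dataset_id) + "?$top=1", token)
    entries = history.get("value", [])
    return entries[0].get("status") if entries else None


def refresh_with_retry(group_id, dataset_id, token, send=call_api,
                       retries=1, poll_seconds=60, max_polls=60, sleep=time.sleep):
    """Trigger a refresh, wait for it to finish, and retry only if it failed."""
    status = None
    for _attempt in range(retries + 1):
        send("POST", refreshes_url(group_id, dataset_id), token,
             {"notifyOption": "NoNotification"})
        status = "Unknown"
        for _ in range(max_polls):
            sleep(poll_seconds)
            status = latest_status(group_id, dataset_id, token, send)
            if status != "Unknown":  # anything else means the refresh has ended
                break
        if status != "Failed":
            # Completed, or still running after the wait: do not stack another refresh.
            return status
    return status
```

You would call `refresh_with_retry(workspace_id, dataset_id, token)` as the last step of the upstream job. The `send` and `sleep` parameters exist only so the logic can be exercised without calling the real service.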

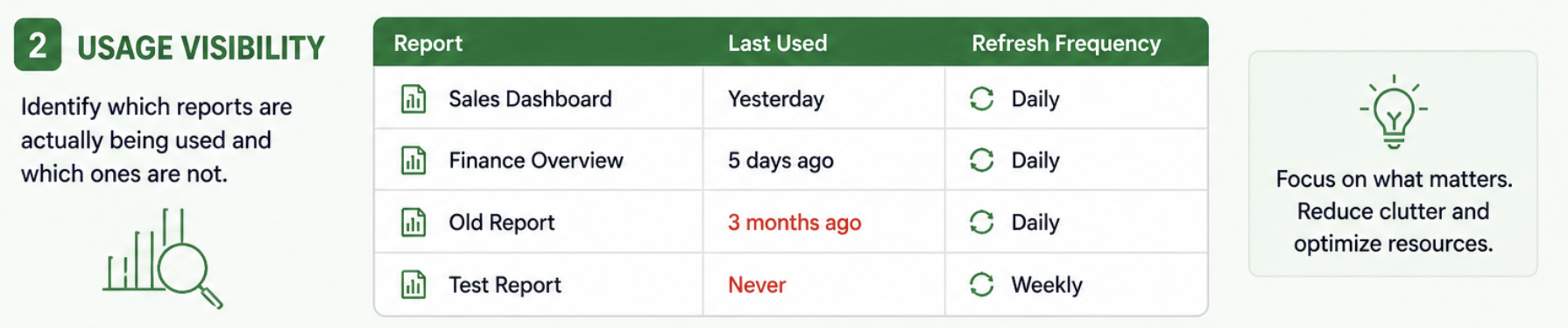

You don’t really know what’s being used

Most teams think they have a good sense of what’s important in their Power BI setup. There are a few “known” reports, some datasets everyone talks about, and a general assumption of what’s being used.

But when you actually try to validate it, things don’t add up. Some reports haven’t been opened in months but are still refreshing every day. Some datasets fail occasionally, but no one notices because the report isn’t used often. And then there are a few critical ones that people depend on, but you only find out how important they are when something breaks.

Without proper visibility, everything ends up being treated the same. You spend time maintaining things that don’t matter, and miss the ones that actually do.

How can you implement it in the real world?

- Start by pulling a list of your workspaces and reports using Power BI APIs.

- You can do this using Power Automate or a simple script that calls the API and stores the result in a table.

- Capture basic fields like report name, workspace, and last activity.

- Add refresh history for datasets so you know how often they run.

- Build a simple Power BI report on top of this data to see usage vs refresh frequency.

Pro tip: Usage data is never perfect. Don’t treat it as the absolute truth. Use it to spot patterns, not to make one-time decisions like deleting reports immediately.
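As a rough illustration of the steps above, the sketch below lists workspaces and their reports, then flags reports that look idle while their dataset keeps refreshing. It makes several assumptions: the access token is already available, the `groups` call only returns workspaces the caller can access, and last-viewed dates come from somewhere else (for example the tenant activity log, which typically needs admin rights), so here they are simply passed in as a dictionary. The function names and the 90-day threshold are hypothetical.

```python
import json
import urllib.request
from datetime import date

API_ROOT = "https://api.powerbi.com/v1.0/myorg"


def get_json(url, token):
    """GET a Power BI REST API URL and return the parsed JSON response."""
    request = urllib.request.Request(url, headers={"Authorization": f"Bearer {token}"})
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())


def build_inventory(token, fetch=get_json):
    """Return one row per report: its workspace and the dataset it is built on."""
    rows = []
    for group in fetch(f"{API_ROOT}/groups", token).get("value", []):
        reports = fetch(f"{API_ROOT}/groups/{group['id']}/reports", token)
        for report in reports.get("value", []):
            rows.append({
                "workspace": group["name"],
                "workspace_id": group["id"],
                "report": report["name"],
                "report_id": report["id"],
                "dataset_id": report.get("datasetId"),
            })
    return rows


def refresh_count(group_id, dataset_id, token, fetch=get_json, top=30):
    """Number of refresh history entries returned for a dataset (up to `top`)."""
    url = f"{API_ROOT}/groups/{group_id}/datasets/{dataset_id}/refreshes?$top={top}"
    return len(fetch(url, token).get("value", []))


def idle_but_refreshing(rows, last_viewed, refresh_counts, today, idle_days=90):
    """Rows whose report has no recent view but whose dataset still refreshes.

    last_viewed maps report_id -> date (assumed to come from your usage source);
    refresh_counts maps dataset_id -> number of recent refreshes.
    """
    flagged = []
    for row in rows:
        viewed = last_viewed.get(row["report_id"])  # None = no view on record
        idle = viewed is None or (today - viewed).days > idle_days
        if idle and refresh_counts.get(row["dataset_id"], 0) > 0:
            flagged.append(row)
    return flagged
```

The rows from `build_inventory` can be written to a table and used as the source for the usage-versus-refresh report. Treat the flagged list as candidates to review, not as a delete list.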

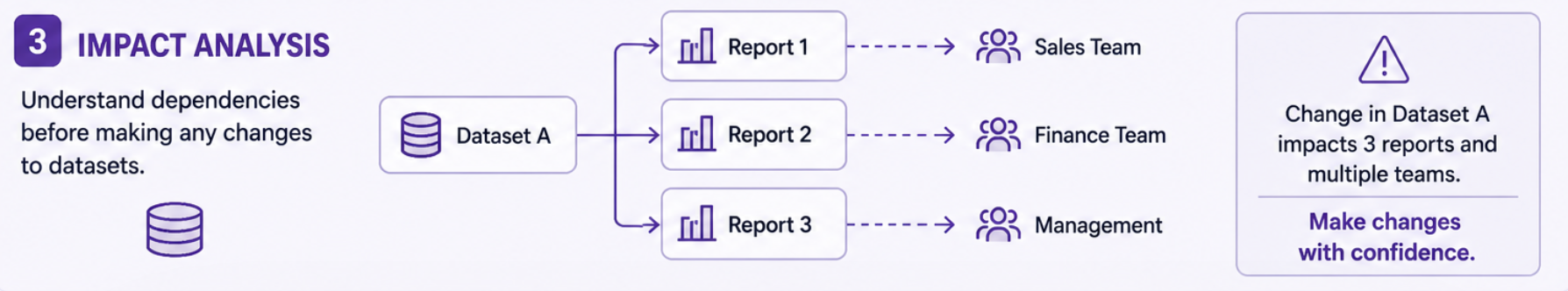

What breaks if I change this?

A dataset change looks small until it breaks multiple reports downstream. Without dependency visibility, every change involves guesswork. And if you have a stack of 100 datasets and reports, checking them one by one is not realistic.

How can you implement it in the real world?

- Pick the dataset you’re planning to change.

- Use Power BI APIs to get a list of reports in your environment. You can do this using Power Automate, a script, or any tool that lets you call APIs.

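- From that list, identify which reports are connected to your dataset. The report list returned by the API includes a dataset ID for each report, which you can match against your dataset. If that’s not available, even a basic match at the workspace level is a good starting point.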

- Store this in a simple table with the dataset name, report name, and workspace.

- Before making the change, review this list and mark the important reports.

- After the change, validate only these reports instead of checking everything manually.

Pro tip: Don’t aim for a perfect dependency map in the beginning. Even a partial view is enough to avoid most surprises. Also, if you’re using shared datasets across workspaces, this becomes much more important. That’s usually where hidden dependencies show up.
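One way to sketch this lookup in a script is shown below. It assumes an access token is already available and relies on each report in the API's report list carrying a `datasetId` field; reports without one are skipped. Because it walks every workspace the caller can access, it also picks up reports in other workspaces that point at a shared dataset. The function names are illustrative, and the result is only as complete as the caller's access.

```python
import json
import urllib.request
from collections import defaultdict

API_ROOT = "https://api.powerbi.com/v1.0/myorg"


def get_json(url, token):
    """GET a Power BI REST API URL and return the parsed JSON response."""
    request = urllib.request.Request(url, headers={"Authorization": f"Bearer {token}"})
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())


def reports_by_dataset(token, fetch=get_json):
    """Map each dataset ID to the reports, in any accessible workspace, built on it."""
    dependents = defaultdict(list)
    for group in fetch(f"{API_ROOT}/groups", token).get("value", []):
        reports = fetch(f"{API_ROOT}/groups/{group['id']}/reports", token)
        for report in reports.get("value", []):
            dataset_id = report.get("datasetId")
            if dataset_id:  # skip reports that do not expose a dataset ID
                dependents[dataset_id].append(
                    {"workspace": group["name"], "report": report["name"]}
                )
    return dict(dependents)


def impact_list(dataset_id, dependents):
    """Reports to validate after changing the given dataset, sorted for review."""
    return sorted(dependents.get(dataset_id, []),
                  key=lambda item: (item["workspace"], item["report"]))
```

Calling `impact_list(dataset_id, reports_by_dataset(token))` before a change gives you the short list to mark up and validate afterwards.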

Where can I start?

If you haven’t worked with APIs before, the easiest way to begin is to pick one use case and follow it through end to end. Start with something simple, like refresh control or usage visibility. Don’t try to cover everything. Just get one flow working properly.

A practical way to approach this:

- First, pull some data using the API. This could be a list of datasets, reports, or refresh history.

- Store that data somewhere simple, like a table or file.

- Build a small view on top of it so you can actually see what’s going on.

- Then add one action. This could be triggering a refresh, flagging failures, or identifying unused reports.

At this point, you’ve already gone beyond most setups. From there, you can build gradually. Add another dataset, extend the logic, or connect it to an existing process. The goal isn’t to automate everything; it’s to make one part of your setup more visible and controlled.
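The store-and-act steps can be very small. The sketch below assumes you have already pulled refresh history per dataset (entries with `status`, `startTime`, and `serviceExceptionJson` fields, as the refresh history call returns them to my knowledge) and shows one way to flatten the failures and write them to CSV. The helper names and the input shape (a dictionary of dataset name to history entries) are hypothetical.

```python
import csv


def failed_refreshes(history_by_dataset):
    """Flatten refresh history into one row per failed refresh."""
    rows = []
    for dataset, entries in history_by_dataset.items():
        for entry in entries:
            if entry.get("status") == "Failed":
                rows.append({
                    "dataset": dataset,
                    "startTime": entry.get("startTime"),
                    "error": entry.get("serviceExceptionJson"),
                })
    return rows


def write_rows(handle, rows):
    """Write rows (a list of dicts with the same keys) as CSV; return the row count."""
    if not rows:
        return 0
    writer = csv.DictWriter(handle, fieldnames=list(rows[0].keys()))
    writer.writeheader()
    writer.writerows(rows)
    return len(rows)
```

For example, `with open("failures.csv", "w", newline="", encoding="utf-8") as f: write_rows(f, failed_refreshes(history))` produces a file you can load straight into a small Power BI report.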

Other areas where APIs can help

The use cases above are usually where most teams start, but APIs don’t stop there. Once you begin working with them, you start noticing other gaps that are harder to manage manually. Things like ownership, monitoring, and workspace visibility don’t feel urgent at first, but they become important as your setup grows and more people start depending on it.

For example, pulling ownership details into one place avoids the usual back and forth when something breaks. Tracking refresh failures removes the need for manual checks. Listing workspaces and understanding access gives you a clearer picture of how your environment is structured. These are not always the first things to implement, but they are often the ones that make a setup easier to manage over time.

Closing thought

Most Power BI setups don’t break because of bad reports. They break because everything around the reports is being handled manually. At the beginning, that’s not a problem. A few datasets, a few reports, and things are easy to manage. But as things grow, the gaps start showing up. Refresh becomes unpredictable, ownership becomes unclear, and every change carries a bit of risk.

APIs don’t fix everything overnight, but they change how you approach these problems. You stop reacting to issues one by one and start building a setup where things are visible, controlled, and easier to manage. And that’s the difference. Not better reports, but a setup that actually holds together as it grows.
